Popular DNS service provider CloudFlare went down today because of a problem with a Flowspec rule update on its edge Juniper routers. The outage took the whole system down for about an hour, and almost all of its 785,000 customers (including 4chan, Wikileaks, Metallica.com and our own TheTechJournal) went down with it. CloudFlare acted quickly, and everything is now operational. CloudFlare CEO Matthew Prince has explained the whole incident; keep reading to learn more about the issue.

What Happened With CloudFlare Today?

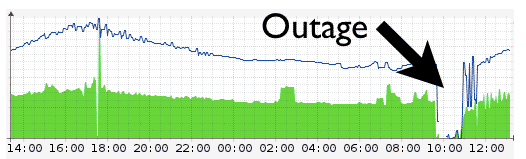

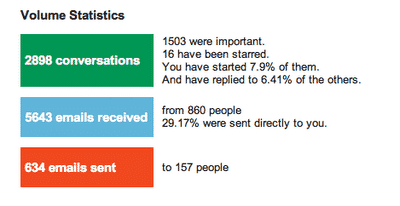

CloudFlare is not only a DNS service; it also provides DNS-level security and caching, which help websites fight everything from spam to DDoS attacks while serving content faster. Here at TTJ we use it as the DNS service for part of our cloud setup, so we depend on it entirely.

But the whole CloudFlare system went down today at around 9:47 UTC (1:47 AM in California), and every site it serves went down with it. A large number of websites use the service in some capacity: about 785,000 of them went down because of this issue. Because routers failed across CloudFlare's entire edge network, all 23 of its data centers around the world were disconnected from the Internet. Some routers came back online within a few minutes, but since all network traffic was then directed to those few available routers, they went down again immediately after rebooting. CloudFlare's operations team tried to act fast, but because the 23 data centers are spread across 14 countries, it took more than 30 minutes to get someone into every data center to manually hard-reboot the routers. The team then figured out which rule had caused the problem and reverted the change. Within an hour, the whole network was back to full capacity.

How Did All This Happen?

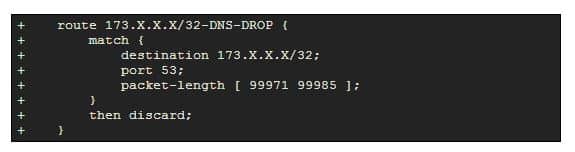

According to CloudFlare CEO Matthew Prince, it all started with a DDoS attack on one of their customers' sites. CloudFlare has an automated attack-profiling system, which detected data packets of a very unusual size: between 99,971 and 99,985 bytes long. The largest packets sent across the Internet are typically around 1,500 bytes, and CloudFlare's network had a maximum packet size of 4,470 bytes. When the profiler identified the attack pattern, the operations team wrote a Flowspec rule to drop packets of that length.

Flowspec accepted the rule and relayed it across the edge network, where it caused every Juniper router to consume all of its RAM until it crashed.
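The rule above targeted packets that could never appear on CloudFlare's network. As an illustration only, here is a minimal Python sketch of the kind of pre-deployment sanity check that would flag such a rule. `PacketLengthRule`, `validate` and the check itself are hypothetical names invented for this example, not CloudFlare's or Juniper's code, and the limits are simply the 4,470-byte network maximum from the article and the 65,535-byte IPv4 maximum. A check like this would not fix the router bug that exhausted memory; it only shows why the rule was suspicious.

```python
# Hypothetical sanity check for a Flowspec-style "packet-length" match.
# Not Juniper or CloudFlare code; names and limits are illustrative assumptions.
from dataclasses import dataclass

IPV4_MAX_TOTAL_LENGTH = 65_535  # largest value the 16-bit IPv4 length field can hold
NETWORK_MAX_PACKET = 4_470      # maximum packet size on CloudFlare's network, per the article


@dataclass(frozen=True)
class PacketLengthRule:
    """Drop packets whose length falls in [low, high], inclusive."""
    low: int
    high: int

    def matches(self, packet_length: int) -> bool:
        return self.low <= packet_length <= self.high


def validate(rule: PacketLengthRule, max_packet: int = NETWORK_MAX_PACKET) -> list[str]:
    """Return a list of problems; an empty list means the rule looks sane."""
    problems = []
    if rule.low > rule.high:
        problems.append("range is empty (low > high)")
    if rule.low > IPV4_MAX_TOTAL_LENGTH:
        problems.append("range starts above the 65,535-byte IPv4 limit")
    elif rule.low > max_packet:
        problems.append(f"range starts above the {max_packet}-byte network maximum")
    return problems


if __name__ == "__main__":
    rule = PacketLengthRule(99_971, 99_985)
    print(validate(rule))
    # No packet that fits on the network can ever match this rule.
    print(any(rule.matches(n) for n in range(1, NETWORK_MAX_PACKET + 1)))
```

Running it reports that the 99,971 to 99,985 byte range starts above the IPv4 limit and that no packet size from 1 to 4,470 bytes matches it.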

Overall:

All sites seem to be running smoothly now, and most of them came back online within an hour. In our experience, TheTechJournal was down for about 15 minutes. CloudFlare has promised to look deeper into the matter so it can avoid a repeat in the future, and it will be giving credits to all of its paid customers.

Everything seems fine now, and the way CloudFlare reacted was good. Still, I personally feel that DNS is so important to the Internet that it should be more redundant. Most of the big names rely on services like CloudFlare; maybe we should have some sort of backup or DNS-level load balancing to avoid this type of incident.


It’s impossible for an IPv4 packet to be between 99,971 and 99,985 bytes long. The IPv4 Total Length field is a 16-bit value, so nothing larger than 65,535 bytes can exist. The software that generates their Flowspec rules malfunctioned, and they’re blaming the vendor.
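The 16-bit argument is easy to check. The IPv4 header stores the packet's total length in a 2-byte, big-endian unsigned field, so any value above 65,535 simply cannot be encoded. The minimal Python sketch below uses the standard `struct` module to try encoding a few sizes into such a field; the helper names `pack_total_length` and `fits_in_total_length` are made up for this illustration.

```python
# Illustrates why a 99,971-byte IPv4 packet cannot exist: the Total Length
# field in the IPv4 header is an unsigned 16-bit integer.
import struct

MAX_16BIT = 2**16 - 1  # 65,535


def pack_total_length(length: int) -> bytes:
    """Encode a value as a 2-byte, big-endian (network order) unsigned field."""
    return struct.pack("!H", length)


def fits_in_total_length(length: int) -> bool:
    """True if the value can be stored in the 16-bit Total Length field."""
    try:
        pack_total_length(length)
        return True
    except struct.error:  # raised for values outside 0..65535
        return False


if __name__ == "__main__":
    for size in (1_500, 4_470, 65_535, 65_536, 99_971, 99_985):
        print(size, fits_in_total_length(size))
```

Sizes up to 65,535 encode fine, while 65,536 and both ends of the 99,971 to 99,985 range are rejected.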